How LLMs decide who to cite: The mechanics behind AI Search visibility

Abstract

AI answer engines are shifting the unit of discovery from “ranked links” to “referenced evidence”. Instead of presenting ten blue links and letting the user choose, modern LLM experiences often synthesize an answer and attach a short list of citations. For marketers, this turns visibility into a selection problem: being retrieved, used, and credited inside the response.

This white paper explains the mechanics behind citation selection in LLM-based search experiences, grounded in public research and platform documentation. It clarifies where citation behavior comes from (retrieval-augmented generation and evidence selection under constraints), why citations can still be unreliable, which measurable factors most strongly shape citation outcomes, and how digital marketing and content teams can design assets and measurement systems that raise citation probability while protecting brand accuracy.

Why citations are becoming a primary visibility surface

The macro shift is not speculative

Multiple independent indicators suggest a real movement toward AI-mediated discovery:

- Gartner forecasts that traditional search engine volume will drop 25% by 2026 as AI chatbots and virtual agents absorb demand. (Gartner)

- Gartner also predicts that by 2028, brands’ organic search traffic will decrease 50% or more as consumers adopt generative AI-powered search experiences. (Gartner)

- Datos (reported by The Wall Street Journal) estimated that 5.6% of U.S. desktop browser search traffic went to LLM-based tools in June 2025, up from 2.48% a year earlier (desktop only). (The Wall Street Journal)

- Reuters Institute reporting on publisher strategy indicates strong concern that AI summaries and answer engines reduce referrals, and notes AI Overviews appearing at the top of about 10% of U.S. search results (as of early 2026 reporting). (Reuters Institute)

Market shift signals pointing to citation-first discovery

| Signal type | Metric | Value | Scope / caveat | Source |
|---|---|---|---|---|
| Forecast | Traditional search engine volume change by 2026 | -25% | Gartner prediction, directional planning signal | Gartner press release (Feb 19, 2024) |
| Forecast | Organic search traffic to brands by 2028 | -50% or more | Gartner prediction, assumes adoption of GenAI search | Gartner press release (Dec 14, 2023) |
| Observed behavior | Share of U.S. desktop browser searches going to AI chatbots (June 2025) | 5.6% | Desktop browser only, excludes mobile + apps, Datos dataset | WSJ reporting via PYMNTS (Jul 22, 2025) |
| Observed behavior | Same metric (June 2024) | 2.48% | Same scope and exclusions as above | WSJ reporting via PYMNTS (Jul 22, 2025) |
| Observed behavior | Same metric (Jan 2024) | <1.3% | Same scope and exclusions as above | WSJ reporting via PYMNTS (Jul 22, 2025) |

Taken together, the direction is consistent: more “answers” are being consumed without a traditional SERP click path, and the sources that appear inside the answer are gaining disproportionate influence.

Citation is not ranking, and that difference matters

Traditional SEO is primarily a ranking and click optimization loop:

- The system orders a large set of pages.

- Success is measured by impressions, rank position, and click-through rate.

- Authority and relevance are optimized to win the listing.

Citation visibility is an evidence selection loop:

- The system selects a small set of sources that can justify claims in a generated response.

- Success is measured by citation frequency, citation placement, and correctness of attribution.

- “Being the best page” is not sufficient if your content is not retrievable, usable, and aligned with intent.

This distinction explains why teams often see a mismatch between “we rank well” and “we are not cited”. It is not necessarily a failure of authority. It is frequently a failure of retrieval inclusion, passage usability, intent alignment, or freshness fit.

Citations are a credibility surface, and the surface is still unreliable

Before discussing optimization, it is important to ground expectations: today’s citation systems can be wrong in ways that matter.

The Tow Center for Digital Journalism (Columbia Journalism Review) tested eight AI search engines for news citation behavior and found systematic failures, including incorrect attribution and unreliable linking behaviors. A widely reported figure from the study is that these tools collectively gave incorrect answers to more than 60% of queries (in their evaluation setup). (Columbia Journalism Review)

In practice, this shifts “AI visibility” from a one-time optimization exercise to a monitoring discipline, where teams track not only whether they appear, but whether they are cited accurately. Seerly’s monitoring layer is built specifically around this distinction.

For brands, this is not an academic detail. It changes what “winning citations” means:

- You want visibility, but you also need correctness.

- A citation that points to the wrong page, wrong claim, or wrong brand category can create reputational and conversion damage.

- Monitoring citations is not optional if AI answers become a top-of-funnel channel.

A credible AI visibility strategy must therefore include both:

- increasing citation probability, and

- increasing the probability that the citation is correct and context-appropriate.

Tow Center evaluation snapshot (news attribution task)

| What was tested | How it was tested | Scale | Key outcomes reported | Source |
|---|---|---|---|---|
| Eight generative search tools with live search features | Excerpt-to-article identification (headline, publisher, date, URL) using passages from known news articles | 1,600 queries (20 publishers × 10 articles × 8 chatbots) | Tools collectively provided incorrect answers to more than 60% of queries; Perplexity had 37% incorrect responses, Grok 3 had 94% incorrect responses | Columbia Journalism Review (Tow Center), Mar 6, 2025 |
| ChatGPT Search behavior in same test | Same protocol, 200 prompts per system | 200 prompts for ChatGPT | ChatGPT incorrectly identified 134 articles, signaled low confidence only 15 times, and never declined to answer | Columbia Journalism Review (Tow Center), Mar 6, 2025 |

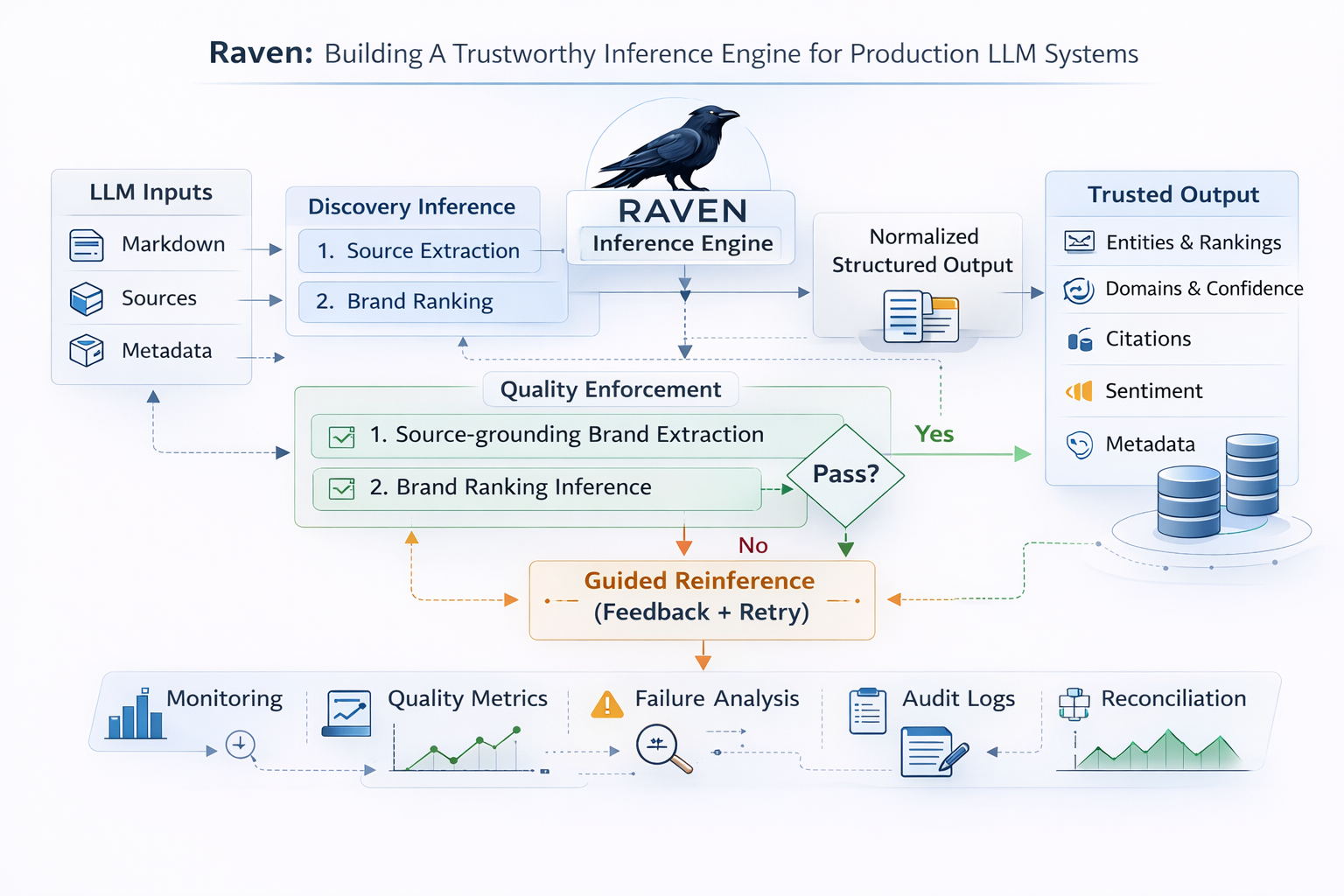

How LLM citation selection works in practice

Most citation-enabled LLM experiences are implemented as retrieval-augmented systems, often described under the umbrella of Retrieval-Augmented Generation (RAG). RAG is widely studied as a method to reduce hallucination and improve traceability by retrieving external documents and grounding outputs in that evidence. (arXiv)

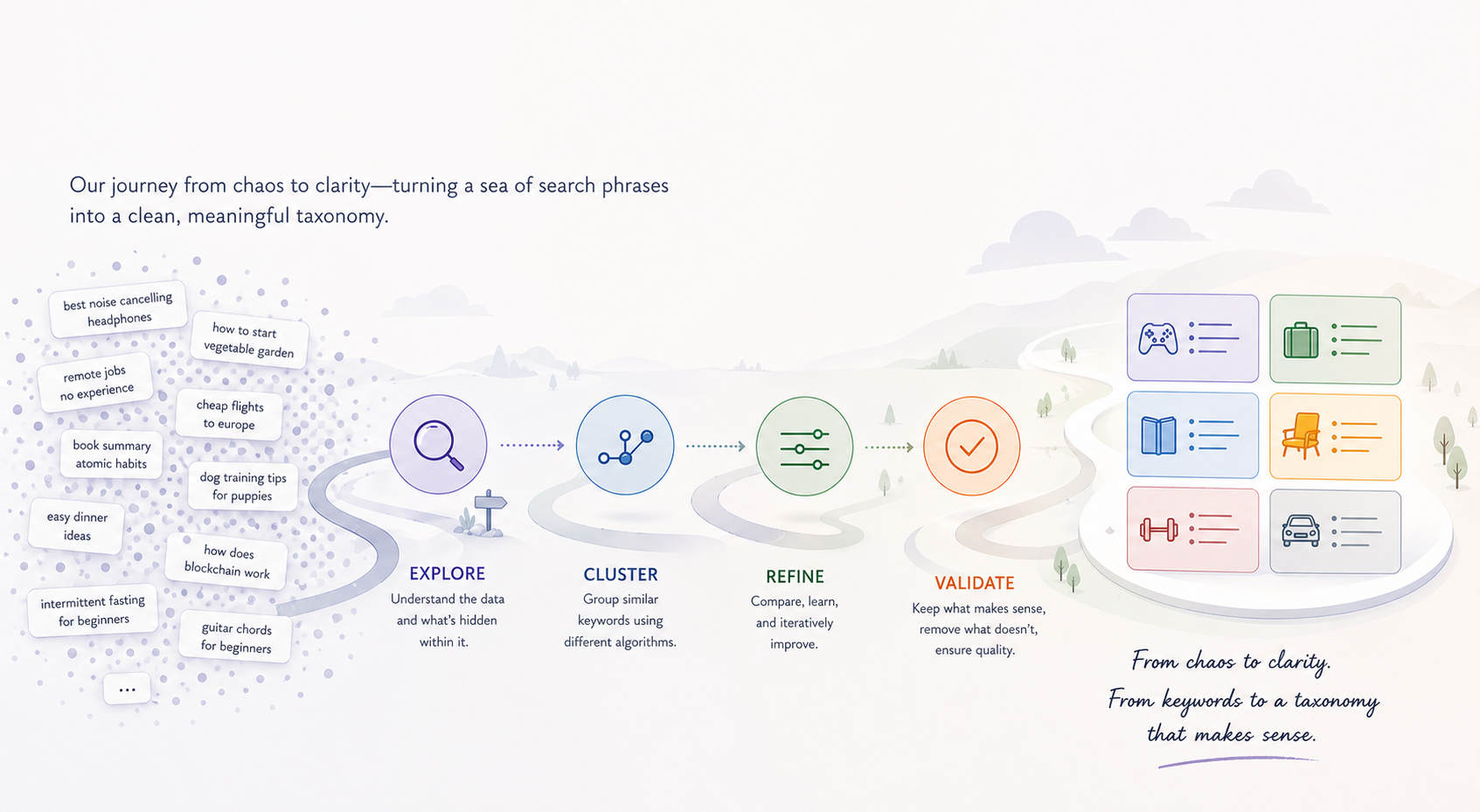

At a high level, citation selection emerges from a pipeline with four separable stages:

Stage A: Intent interpretation and query rewriting

The system first determines:

- what the user is asking (definition, comparison, how-to, evaluation, “latest”),

- what evidence class is appropriate (official docs, third-party reviews, news, research),

- which sub-questions need to be answered.

This stage matters because intent classification can change the entire retrieval footprint. A “what is” prompt tends to pull canonical definitions. An “is it reliable” prompt tends to pull validation, criticism, benchmarks, or independent third-party coverage.

Stage B: Candidate retrieval (the gate)

Next, the system retrieves a bounded candidate set from one or more sources: web search APIs, internal indexes, curated corpora, embedding-based retrieval systems, or vertical sources.

A key constraint is computational: the model cannot deeply evaluate hundreds or thousands of pages in real time. Most systems operate with a limited candidate set and a limited context window. That means:

- If your content is not consistently retrieved into the candidate set, it will not be cited.

- Small changes in candidate ordering can have large downstream effects because only a small number of sources will ultimately be attached as citations.

Stage C: Passage-level usefulness scoring

Even when your page is retrieved, citation often depends on whether the system finds extractable passages that support claims cleanly.

This is where many marketing assets fail. A page can be persuasive yet unciteable because it lacks:

- crisp definitional statements,

- scoped claims,

- structured comparisons,

- explicit methodology,

- unambiguous data with context.

RAG literature emphasizes that system quality depends not only on retrieval but also on how information is selected and integrated into generation. (arXiv)

Stage D: Answer composition and citation attachment

Finally, the model composes the response and attaches citations to the claims or sections that rely on retrieved material.

In products like ChatGPT Search, citations are explicitly surfaced as inline references and source links when web search is used. (OpenAI Help Center)

Citation attachment is therefore not a separate “ranking layer”. It is the visible artifact of which sources were retrieved, deemed useful, and used during answer construction.
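
To make the four stages concrete, the following is a deliberately simplified sketch, not a description of how any production answer engine is implemented. It assumes keyword rules in place of a learned intent classifier, token overlap in place of real retrieval ranking, and a hypothetical `PREFERRED_TYPE` map for source-type fit. All names, URLs, and the toy corpus are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    url: str
    source_type: str      # e.g. "official_docs", "third_party_review"
    passages: list[str]


def tokens(text: str) -> set[str]:
    """Lowercased word set with basic punctuation stripped."""
    return {w.strip(".,?!\"'").lower() for w in text.split()} - {""}


def classify_intent(query: str) -> str:
    # Stage A: crude keyword rules stand in for a real intent classifier.
    q = query.lower()
    if q.startswith("what is"):
        return "definition"
    if " vs " in q:
        return "comparison"
    if "reliable" in q:
        return "skeptical"
    return "general"


# Hypothetical mapping from intent to the evidence type assumed to fit best.
PREFERRED_TYPE = {
    "definition": "official_docs",
    "comparison": "third_party_review",
    "skeptical": "third_party_review",
}


def retrieve(query: str, corpus: list[Doc], k: int) -> list[Doc]:
    # Stage B: bounded candidate set; anything outside the top k is never read.
    q = tokens(query)
    ranked = sorted(corpus, key=lambda d: len(q & tokens(" ".join(d.passages))),
                    reverse=True)
    return ranked[:k]


def best_passage(query: str, doc: Doc) -> tuple[int, str]:
    # Stage C: pick the single passage that overlaps most with the query.
    q = tokens(query)
    return max(((len(q & tokens(p)), p) for p in doc.passages), key=lambda x: x[0])


def answer_with_citations(query: str, corpus: list[Doc], k: int = 2):
    # Stage D: citations are whatever survived retrieval and passage scoring.
    intent = classify_intent(query)
    cited = []
    for doc in retrieve(query, corpus, k):
        overlap, passage = best_passage(query, doc)
        if overlap == 0:
            continue  # retrieved, but nothing usable to support a claim
        bonus = 1 if doc.source_type == PREFERRED_TYPE.get(intent) else 0
        cited.append((overlap + bonus, doc.url, passage))
    cited.sort(key=lambda x: x[0], reverse=True)
    return intent, [(url, passage) for _, url, passage in cited]


CORPUS = [
    Doc("https://example.com/docs/what-is-acme", "official_docs",
        ["Acme is a citation monitoring tool.", "Pricing starts at a flat fee."]),
    Doc("https://example.org/review", "third_party_review",
        ["We tested Acme for a month.", "Acme is reliable for small teams."]),
    Doc("https://example.net/blog", "vendor_blog",
        ["Our journey began in a garage."]),
]

intent, citations = answer_with_citations("What is Acme?", CORPUS, k=2)
```

In this toy run the vendor blog is never cited: it falls outside the bounded candidate set, and even if it were retrieved it contains no passage that supports the claim. Lowering `k` to 1 removes the review as well, which illustrates how sensitive outcomes are to candidate ordering.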

Because citation is downstream of retrieval and passage usability, measurement needs to separate these layers. Seerly’s reporting intentionally mirrors this pipeline so teams can diagnose whether a miss is retrieval, usability, or intent mismatch.

The measurable drivers of “who gets cited”

This section focuses on drivers that are mechanically implied by retrieval-augmented systems and consistent with observed industry behavior. Where claims are based on public research, citations are provided. Where claims are best-practice inference, they are labeled as such.

Citation mechanics map (from system stage to marketing lever)

| System stage | What the system is doing | What typically decides outcomes | What teams can influence |
|---|---|---|---|
| Intent interpretation | Classifies what the user wants (definition, comparison, how-to, skeptical, latest) | Query framing, safety constraints, evidence norms | Intent coverage strategy, canonical phrasing, content types by funnel stage |
| Candidate retrieval | Pulls a bounded set of documents from indexes/tools | Crawlability, indexing, query-match, retrieval ranking | Technical SEO, crawl access, internal linking, canonicalization, speed, structured titles/headers |
| Passage selection | Chooses cite-able spans that support claims | Specificity, clarity, proximity of claim and evidence, formatting | Reference-grade writing, answer-first structure, tables/bullets, scoped claims, definitions |
| Answer + citation attachment | Writes synthesis and attaches sources | Trust tiering, redundancy across sources, source-type fit | Trust assets (methodology, limitations, compliance), third-party validation, consistent terminology |

Retrieval inclusion and prominence

Claim (mechanistic): Being retrieved into the candidate set is a hard prerequisite for citation, and candidates ranked earlier are more likely to be used.

Why it is true: Bounded context and time constraints limit how many sources are read and used. RAG surveys describe retrieval as foundational, and downstream stages cannot use what is not retrieved. (arXiv)

Practical implications for teams:

- You must optimize for the retrieval surfaces that your market actually uses, not only for classic Google rankings.

- Crawlability, response speed, canonicalization, and content accessibility become more than technical hygiene. They become visibility gates.

Source trust and source-type fit

Claim (research-grounded + mechanistic): Systems tend to prefer sources that reduce risk of misinformation and attribution errors, and they often prefer different source types depending on intent.

Grounding: The Tow Center findings highlight that citation reliability is a problem, which increases the incentive for systems to privilege sources perceived as more reliable, especially on sensitive queries. (Columbia Journalism Review)

Inference (explicit): As systems mature, trust tiering becomes more prominent, particularly for YMYL-adjacent topics and evaluative prompts. For B2B SaaS, this often shows up as preference for recognizable third-party coverage and documentation over vendor marketing pages for certain intents.

Practical implications:

- Treat “trust assets” as first-class content: editorial standards pages, methodology pages, transparent ownership, security and compliance posture, and changelogs for frequently updated facts.

- Earn third-party validation that is not self-authored. In evaluative prompts, third-party sources often serve as the credibility substrate.

Passage-level specificity and cite-ability

Claim (mechanistic): Citations attach to claims, and claim support is easier when content is explicit, structured, and passage-level unambiguous.

Practical implications:

- Put the answer early, then expand.

- Use definitional blocks, comparisons, and scoped statements that a system can quote without interpretation.

- Avoid ambiguous superlatives (“best”, “leading”) without evidence, because they are difficult to cite responsibly.
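
One way to apply these implications during editing is a small lint pass over draft passages. The sketch below is an illustrative heuristic only: the superlative list, the "evidence" pattern, and the 30-word threshold are assumptions chosen for the example, not rules that any answer engine is known to use.

```python
import re

# Illustrative word list; extend it to match your own style guide.
SUPERLATIVES = {"best", "leading", "top", "unrivaled", "world-class"}

# Assumed signals that a claim carries some support: a number or an attribution.
EVIDENCE = re.compile(r"\d|according to|source:|methodology", re.IGNORECASE)


def lint_passage(passage: str) -> list[str]:
    """Return human-readable cite-ability warnings for one passage."""
    issues = []
    words = set(re.findall(r"[a-z\-]+", passage.lower()))
    used = sorted(SUPERLATIVES & words)
    if used and not EVIDENCE.search(passage):
        issues.append(f"unsupported superlative: {', '.join(used)}")
    # "Put the answer early": flag passages whose first sentence runs long.
    first_sentence = re.split(r"(?<=[.!?])\s+", passage.strip())[0]
    if len(first_sentence.split()) > 30:
        issues.append("first sentence exceeds 30 words; answer may not be stated early")
    return issues


vague = lint_passage("We are the leading platform for teams.")
scoped = lint_passage("Acme tracks citations across 4 answer engines; see methodology.")
```

A check like this cannot judge whether a claim is true or useful. It only surfaces passages that a human editor should rescope or support with evidence.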

Freshness and update signaling

Claim (research-grounded + mechanistic): Time-sensitive prompts increase preference for recently updated sources. This amplifies the penalty of stale pages in fast-moving categories.

Grounding: The publisher traffic concern and AI Overviews expansion reported by Reuters Institute reflect how generative summaries change click behavior and source exposure, which raises the stakes of being current on queries where “latest” matters. (Reuters Institute)

Practical implications:

- Maintain canonical “facts pages” with meaningful updates: pricing, feature matrices, integration docs, benchmarks, and definitions.

- Avoid “fake freshness” (superficial date changes). Systems that detect thin updates may discount the signal, and users will lose trust.
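
Teams can audit their own pages for “fake freshness” before publishing. The sketch below is a minimal internal check under stated assumptions: dates appear in ISO `YYYY-MM-DD` form, and a page counts as substantively updated only if its text changes once dates and whitespace are ignored. It does not model how any retrieval system evaluates freshness.

```python
import hashlib
import re


def content_fingerprint(text: str) -> str:
    """Hash the page text with ISO dates removed and whitespace normalized."""
    without_dates = re.sub(r"\b\d{4}-\d{2}-\d{2}\b", "", text)
    normalized = " ".join(without_dates.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def is_substantive_update(old_text: str, new_text: str) -> bool:
    """True only when something other than a date stamp changed."""
    return content_fingerprint(old_text) != content_fingerprint(new_text)


old = "Last updated 2025-01-10. Plan A costs $10 per seat."
date_only = "Last updated 2025-06-01. Plan A costs $10 per seat."
real_change = "Last updated 2025-06-01. Plan A costs $12 per seat."

date_only_is_update = is_substantive_update(old, date_only)
real_change_is_update = is_substantive_update(old, real_change)
```

Running a check like this in a publishing workflow makes the “dates match substance” standard enforceable rather than aspirational.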

Query framing and run-to-run variance

Claim (mechanistic): Small changes in wording can change retrieval results and therefore citations, even when the underlying topic is the same.

Why it is true: The retrieval stage is sensitive to phrasing. In addition, generation can be stochastic depending on decoding settings, especially in consumer products that balance creativity and latency.

Note: Even if a single forward pass is deterministic in principle, that is not what users experience. Many systems sample during decoding, and citation selection is also affected by real-time retrieval variance and index updates.

Practical implication: You should measure visibility across a prompt set, not a single prompt, and track distributions over time.

The Citation Visibility Model

For digital marketing experts, it helps to separate the problem into three layers with distinct levers:

Layer 1: Retrieval eligibility

Can the system access and retrieve your content?

- crawlability, render strategy, canonical structure, response speed, robots rules

Layer 2: Evidence usability

Can the system use your content as support for claims?

- specificity, structure, definitional clarity, tables, scoped comparisons, methodology transparency

Layer 3: Intent match and trust

Is your content the “right type” of evidence for the user’s intent?

- official docs vs third-party reviews vs news vs academic sources

- trust assets, independent validation, editorial posture, updates

Most teams over-invest in Layer 3 “authority narratives” and under-invest in Layer 1 and Layer 2 mechanics. In LLM citation systems, that is often the wrong allocation.

What actually works: an operational playbook

This section is written to be executed by senior SEO, content strategy, and technical marketing teams. It avoids vague advice and focuses on concrete deliverables.

Intent-to-asset matrix for citation coverage

| Buyer intent class | Example prompt | Evidence type LLMs tend to prefer | Asset you should maintain | What “good” looks like |
|---|---|---|---|---|
| Definition | “What is Seerly?” | Official canonical definitions | Product definition page | Clear scope, category placement, what it is and is not, stable URL |
| Mechanism | “How does Seerly measure AI visibility?” | Methodology and technical explanation | Methodology + “how it works” page | Explicit inputs/outputs, evaluation approach, limitations, examples |
| Implementation | “How do I set up tracking?” | Documentation and stepwise guidance | Docs, quickstarts, integration guides | SSR-accessible content, numbered steps, troubleshooting, screenshots optional |
| Comparison | “Seerly vs X” | Independent comparisons, constraint-based analysis | Comparison pages + enable third-party reviews | Feature tables, constraints, neutral tone, avoids hype claims |
| Evaluation / proof | “Does AI visibility tracking work?” | Case studies, benchmarks, third-party validation | Case studies + benchmarks | Baselines, outcomes, context, methodology, reproducibility notes |
| Skeptical / risk | “Is Seerly reliable?” | Trust and governance artifacts | Security, privacy, compliance, limitations | Clear policies, data handling, audit posture, explicit limitations |
| Freshness-sensitive | “What changed recently?” | Recent updates and changelogs | Changelog, release notes, updated canonical pages | Real updates, dates match substance, canonical references |

Build a “citation-ready” canonical set

Create a small set of pages designed to be referenced. For B2B SaaS, a credible baseline is:

- Definition page: What the product is, who it is for, what it is not, and clear category placement.

- Mechanism and methodology page: How it works, inputs, outputs, limitations, and measurement methodology.

- Integration documentation: Setup steps, APIs, SDKs, data flows, and troubleshooting.

- Comparison pages: Constraint-based comparisons, written to support buyer decisions rather than listicles.

- Proof assets: Case studies with measurable outcomes, context, and constraints. Avoid cherry-picked claims without baseline context.

- Trust assets: Security and privacy posture, compliance statements, data handling, editorial standards, and update policies.

These pages should be stable, internally linked, and updated meaningfully when facts change.

Engineer cite-ability at the passage level

A practical pattern that repeatedly improves reuse as evidence:

- A short “answer block” near the top with:

  - definition,

  - scope,

  - one or two explicit claims,

  - and a link to methodology or docs.

- Then detail sections that map to user prompts:

  - “How it works”

  - “What we measure”

  - “How accuracy is validated”

  - “Limitations”

  - “Examples”

Also adopt consistent naming for core concepts. LLMs and retrieval systems handle synonyms, but ambiguity still increases wrong citations and wrong brand associations.

Make content retrievable in constrained pipelines

From a technical perspective, citation visibility often correlates with boring fundamentals:

- Ensure important copy and tables are available to crawlers without requiring heavy client execution.

- Avoid fragile canonicalization across marketing pages, docs, and blog subdomains.

- Keep response times low for first byte and page render, because some retrieval systems have strict timeouts.

- Expose clean structured navigation and internal linking between canonical pages.

This is not “technical SEO as tradition”. It is “technical accessibility as retrieval eligibility”.
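
Robots rules are one eligibility gate that is easy to test offline. The sketch below uses Python's standard `urllib.robotparser` against an in-memory robots.txt. The crawler name `ExampleAnswerBot`, the rules, and the URLs are hypothetical; substitute the user agents documented by the platforms you care about.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one AI crawler is blocked from the docs section.
ROBOTS_TXT = """\
User-agent: ExampleAnswerBot
Disallow: /docs/

User-agent: *
Disallow: /admin/
"""

CANONICAL_URLS = [
    "https://example.com/docs/quickstart",
    "https://example.com/pricing",
    "https://example.com/admin/",
]


def blocked_urls(robots_txt: str, user_agent: str, urls: list[str]) -> list[str]:
    """Return the URLs that the given user agent is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse from memory, no network call
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


blocked_for_bot = blocked_urls(ROBOTS_TXT, "ExampleAnswerBot", CANONICAL_URLS)
blocked_for_other = blocked_urls(ROBOTS_TXT, "OtherBot", CANONICAL_URLS)
```

In this example the named crawler is shut out of the documentation, which is exactly the content an implementation-intent prompt would need. A check like this covers only robots rules; rendering, timeouts, and canonicalization need separate tests.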

Cover intent classes deliberately

You cannot win citations across the funnel with a single page. Build around intent:

- Definition intent: official definition and category placement

- Implementation intent: docs, quickstarts, and troubleshooting

- Evaluation intent: proof, benchmarks, third-party validation

- Comparison intent: neutral comparison pages and independent sources

- Skeptical intent: limitations, risks, governance, and criticism handling

- Trend intent: current research notes, changelogs, and timely updates

This aligns your asset types to the evidence types an answer engine is likely to prefer.

Optimize for correctness, not just inclusion

Given the documented failure rates in citation accuracy for news-style queries, it is safer to assume that citation errors will occur and build mitigation:

- Use canonical definitions that third parties can cite.

- Maintain consistent terminology across product pages, docs, and announcements.

- Provide unambiguous “single source of truth” pages for pricing, features, and claims that are frequently repeated.

- Monitor AI answers for incorrect attribution and correct the underlying ambiguity.

The Tow Center findings make it clear that citation UX can project confidence even when the underlying attribution is wrong. (Columbia Journalism Review)

A practical standard is to track attribution correctness as a first-class metric: whether the citation points to the right URL, supports the right claim, and uses current information. Seerly includes automated checks and sampling workflows to make this measurable.
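
As a rough illustration of the rules-based side of such checks, the sketch below classifies a logged citation against a hypothetical single-source-of-truth map. The `CANONICAL` mapping, the keyword matching, and the result labels are assumptions for the example and do not describe Seerly's implementation. Anything the rules cannot decide is routed to human review.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Citation:
    claim: str       # sentence in the AI answer that the citation supports
    cited_url: str   # URL attached to that sentence


# Hypothetical map: topic keyword -> canonical path on your own domain.
CANONICAL = {"pricing": "/pricing", "security": "/trust/security"}


def check_attribution(citation: Citation, own_domain: str) -> str:
    """Classify a citation as ok, wrong_page, not_our_domain, or needs_human_review."""
    parsed = urlparse(citation.cited_url)
    if parsed.netloc != own_domain:
        return "not_our_domain"
    for keyword, path in CANONICAL.items():
        if keyword in citation.claim.lower():
            # Ignore a trailing slash when comparing against the canonical path.
            return "ok" if parsed.path.rstrip("/") == path else "wrong_page"
    return "needs_human_review"


results = [
    check_attribution(Citation("Acme pricing starts at $10.",
                               "https://example.com/pricing/"), "example.com"),
    check_attribution(Citation("Acme pricing starts at $10.",
                               "https://example.com/blog/2021-launch"), "example.com"),
    check_attribution(Citation("Acme integrates with chat tools.",
                               "https://example.com/docs"), "example.com"),
]
```

Rules like these catch the "right claim, wrong page" case cheaply. Whether the cited page actually supports the claim still requires sampling and human judgment.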

Measurement: what a credible team should track

To make citation visibility measurable, teams need a repeatable test harness: a stable prompt library, repeated runs over time, and metrics that separate visibility from correctness. Single examples can be illustrative, but they cannot support conclusions or guide prioritization.

Build a prompt set, not a single prompt

Define a prompt set across:

- intents (definition, comparison, how-to, evaluation, latest),

- personas (buyer, practitioner, analyst),

- and query formulations (short, detailed, skeptical).

Store the prompt set, version it, and only change it deliberately; otherwise trend lines become meaningless.
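
A prompt set along these three dimensions can be generated and versioned with a few lines of code. The sketch below is one possible shape, assuming hypothetical question templates and a content hash as the version identifier. The brand name and template wording are placeholders.

```python
import hashlib
import json
from itertools import product

# Hypothetical templates, one per intent class named above.
INTENTS = {
    "definition": "What is {brand}?",
    "comparison": "How does {brand} compare to alternatives?",
    "how-to": "How do I set up {brand}?",
    "evaluation": "Does {brand} work as claimed?",
    "latest": "What changed recently in {brand}?",
}
PERSONAS = ["buyer", "practitioner", "analyst"]
FORMULATIONS = {
    "short": "{q}",
    "detailed": "{q} Please explain with sources.",
    "skeptical": "{q} What are the limitations?",
}


def build_prompt_set(brand: str) -> list[dict]:
    """Cross every intent with every persona and formulation."""
    prompts = []
    for (intent, template), persona, (style, wrapper) in product(
        INTENTS.items(), PERSONAS, FORMULATIONS.items()
    ):
        question = template.format(brand=brand)
        text = f"As a {persona}: " + wrapper.format(q=question)
        prompts.append({"intent": intent, "persona": persona,
                        "style": style, "text": text})
    return prompts


def prompt_set_version(prompts: list[dict]) -> str:
    """Short content hash; any edit to the set produces a new version id."""
    canonical = json.dumps(prompts, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


prompts = build_prompt_set("ExampleCo")
version = prompt_set_version(prompts)
```

Logging the version id next to every run makes it obvious when a trend break coincides with a prompt set change rather than a real shift in citations.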

Measure three outputs, not one

- Retrieval presence (where possible): If your tooling can infer whether a domain is appearing in candidate sets, track it. If not, proxy via consistent citation presence across repeated runs.

- Citation frequency and placement: Track how often you are cited and whether you appear as a primary or secondary source.

- Attribution correctness: Track whether the answer uses you to support correct claims, links to the correct page, and represents your product category accurately.

AI citation visibility measurement template

| Metric | Definition | How to measure | Why it matters | Common failure mode |
|---|---|---|---|---|
| Citation frequency | % of prompt runs where your domain is cited | Run a fixed prompt set repeatedly and log citations | Primary visibility outcome | Measuring a single prompt and overfitting to it |
| Citation placement | Whether you appear as primary citation vs secondary | Track ordering or prominence in citations list | Higher placement tends to drive more trust and clicks | Ignoring placement and treating all citations as equal |
| Attribution correctness | Whether the citation points to the correct page and supports the correct claim | Human spot-check sampled runs, or rules-based validation | Prevents harmful misrepresentation | Assuming citations imply accuracy by default |
| Intent coverage score | % of intent classes where you have a canonical, cite-able asset | Map prompts to intents and score coverage | Prevents “we only show up for brand queries” | One-page strategy (product page as everything) |
| Freshness compliance | % of canonical pages updated when facts changed | Track update cadence and change logs | Prevents stale-page substitution in time-sensitive prompts | Fake freshness (date updates without substance) |

Implementation note: Several teams operationalize these metrics using a dedicated citation monitoring workflow (prompt libraries, scheduled runs, citation diffs, and correctness review). Seerly provides an off-the-shelf implementation of this approach.
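
Citation frequency and placement from the template above can be computed directly from logged runs. The sketch below assumes a hypothetical log format in which each run records the prompt, its intent class, and the ordered list of cited domains, and it treats the first citation as the "primary" one. The sample data is invented for illustration.

```python
from collections import defaultdict

# Hypothetical run log: one entry per prompt execution.
RUNS = [
    {"prompt": "p1", "intent": "definition", "cited": ["example.com", "wikipedia.org"]},
    {"prompt": "p1", "intent": "definition", "cited": ["wikipedia.org", "example.com"]},
    {"prompt": "p2", "intent": "comparison", "cited": ["reviewsite.example"]},
    {"prompt": "p2", "intent": "comparison", "cited": []},
]


def citation_metrics(runs: list[dict], domain: str) -> dict:
    """Citation frequency, primary-placement share, and frequency per intent."""
    if not runs:
        raise ValueError("no runs logged")
    cited_runs = [r for r in runs if domain in r["cited"]]
    primary_runs = [r for r in cited_runs if r["cited"][0] == domain]

    per_intent = defaultdict(lambda: [0, 0])  # intent -> [cited count, total runs]
    for r in runs:
        per_intent[r["intent"]][1] += 1
        if domain in r["cited"]:
            per_intent[r["intent"]][0] += 1

    return {
        "citation_frequency": len(cited_runs) / len(runs),
        "primary_share": len(primary_runs) / len(cited_runs) if cited_runs else 0.0,
        "frequency_by_intent": {i: c / t for i, (c, t) in per_intent.items()},
    }


metrics = citation_metrics(RUNS, "example.com")
```

Breaking frequency down by intent exposes the "we only show up for brand queries" pattern: in this invented log the domain is cited on every definition run and on no comparison run.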

Design for variance

Because retrieval indexes update and generation can vary, measure distributions:

- Run the same prompt multiple times across multiple days.

- Track the spread, not just the mean.

- Treat sudden shifts as signals to investigate changes in retrieval inclusion, page updates, or competing sources.
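
The steps above can be sketched as a small summary over daily citation rates. The data and the 0.2 shift threshold below are invented for illustration; a suitable threshold depends on how many runs back each daily rate and how noisy the category is.

```python
from statistics import mean, pstdev

# Hypothetical daily citation rates for one prompt set (share of runs that cite you).
DAILY_RATES = {
    "2025-03-01": 0.40,
    "2025-03-02": 0.45,
    "2025-03-03": 0.35,
    "2025-03-04": 0.10,
    "2025-03-05": 0.15,
}


def summarize(rates) -> dict:
    """Report the spread alongside the mean, not the mean alone."""
    values = list(rates)
    return {
        "mean": mean(values),
        "spread": pstdev(values),  # population standard deviation
        "min": min(values),
        "max": max(values),
    }


def flag_shifts(daily: dict[str, float], threshold: float = 0.2) -> list[str]:
    """Return the days whose rate moved by at least `threshold` versus the prior day."""
    days = sorted(daily)  # ISO dates sort chronologically as strings
    return [
        later for earlier, later in zip(days, days[1:])
        if abs(daily[later] - daily[earlier]) >= threshold
    ]


summary = summarize(DAILY_RATES.values())
shift_days = flag_shifts(DAILY_RATES)
```

A flagged day is a prompt to investigate, not a conclusion: the cause may be a change in retrieval inclusion, a page update, or a competing source entering the candidate set.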

Limits, risks, and what this paper does not claim

A credible stance requires explicit boundaries:

- There is no single universal “citation score”. Different systems use different retrieval stacks and trust heuristics.

- Citations are not always evidence of endorsement. They are evidence of use as support for a claim.

- Some categories are inherently volatile: news, pricing, and fast-changing product comparisons.

- Citation correctness remains a known weakness in the industry, particularly for attribution in news-like scenarios. (Columbia Journalism Review)

This does not make citation optimization pointless. It means the work must be approached as an engineering discipline with monitoring and continuous correction.

Conclusion

Citations are becoming a dominant discovery surface as AI answer engines capture more user attention and increasingly compress the click path. Forecasts from Gartner describe a significant shift in search behavior and organic traffic dynamics over the next few years. (Gartner) At the same time, independent evaluation shows that AI citation behavior can be unreliable and error-prone, which forces brands to think beyond visibility and include correctness. (Columbia Journalism Review)

The mechanics behind “who gets cited” are best understood through the retrieval-augmented lens: citations are a downstream outcome of retrieval eligibility, evidence usability, and intent-aligned trust selection. RAG research frames retrieval and grounding as the core pathway to reducing hallucination and increasing traceability, which maps directly onto why cite-able content wins. (arXiv)

For digital marketing teams, the practical playbook is clear:

- Engineer retrieval eligibility through technical accessibility and stable canonical structures.

- Engineer cite-ability through passage-level clarity, structure, and unambiguous claims.

- Engineer trust and intent fit through asset types that match how buyers ask questions.

- Measure citation visibility as a distribution, and monitor correctness continuously.

That is the durable path to AI search visibility that holds up under scrutiny, rather than a collection of prompt tricks that collapse when the model, index, or UI shifts.

Teams that want to operationalize this typically start by building a repeatable prompt set, running it on a cadence, and tracking citation frequency and attribution correctness, which is the workflow Seerly is designed to support.